The Case for Machines That Can Explain Themselves

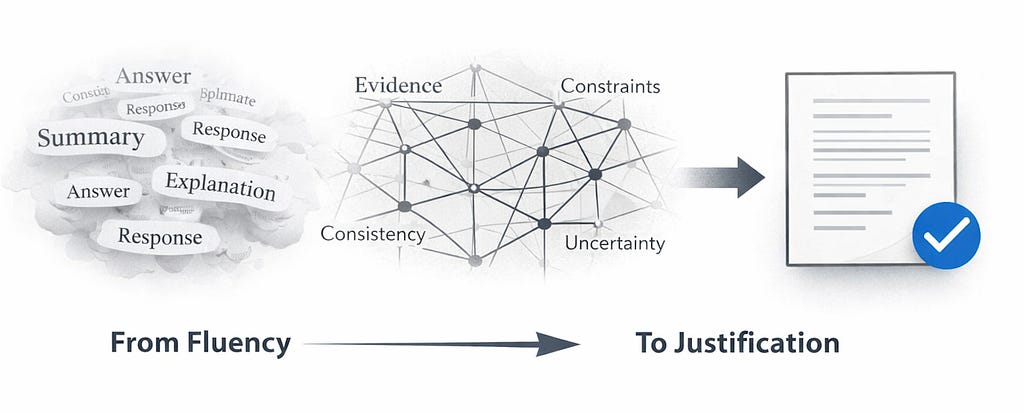

When fluency is no longer enough, justification must become the governing principle of computational reasoning.

Editor’s note (Dec 19, 2025): An earlier version of this essay displayed non-public citation placeholders from my drafting workflow, which rendered as broken links. I’ve replaced them with a verifiable reference list (primary sources where possible) and added a short change log below. The argument is unchanged.

For a piece about justification, every claim here should be traceable to a checkable source.

Modern language models write with a proficiency that would have been unthinkable a decade ago. Their fluency, however, has created a misplaced sense of security. Today, polished text reveals little about the soundness of the reasoning behind it. The question is no longer whether a system can produce an answer, but whether the answer can withstand examination.

This is not conjecture. Across medicine, finance, scientific inquiry, and legal analysis, empirical findings point to the same pattern. Clinical summarization systems introduce subtle but consequential distortions in patient information while sounding fully authoritative¹². Legal evaluations show arguments supported by citations to cases that do not exist³. Factual audits reveal confident answers grounded in no evidence at all⁴⁵. Large-scale usage studies demonstrate that these issues surface not in dramatic failures but in quiet inconsistencies woven into everyday interactions⁶.

What emerges from this research is straightforward: these systems are skilled at extending a line of discourse and far less capable of interrogating it. They reproduce the surface of expertise while missing the internal scaffolding that makes expertise trustworthy.

This is a familiar problem for anyone who has built or governed large-scale analytical systems in environments where accountability is non-negotiable. In regulated domains, the most serious failures are not mathematical missteps but reasoning gaps — places where an output looks credible but lacks the evidentiary chain required for audit, compliance, or institutional trust.

Retrieval provides some grounding, but it rarely goes far enough. Even with the right documents in hand, models often misinterpret their contents, generalize too broadly, or ignore conflicting information⁷⁸. Having evidence is not the same as using it well.

Confidence adds a different kind of difficulty. Models frequently express certainty where none is warranted⁵. Users, in turn, tend to trust answers more when they come with explanations, even when those explanations are not supported by evidence⁹. Apparent transparency can, paradoxically, deepen the underlying opacity.
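One way to make "certainty where none is warranted" measurable is to compare stated confidence with observed accuracy. The sketch below computes expected calibration error, a standard summary of that gap. The function name, the ten-bin default, and the assumption that every answer arrives with a confidence between 0 and 1 are illustrative choices, not details taken from the cited studies.

```python
def expected_calibration_error(confidences, correct, n_bins=10):
    """Weighted average gap between stated confidence and accuracy, per bin.

    confidences: floats in [0, 1], one per answer (assumed to be available).
    correct: booleans, True where the corresponding answer was right.
    """
    bins = [[] for _ in range(n_bins)]
    for conf, ok in zip(confidences, correct):
        # A confidence of exactly 1.0 is placed in the top bin.
        index = min(int(conf * n_bins), n_bins - 1)
        bins[index].append((conf, ok))

    total = len(confidences)
    ece = 0.0
    for bucket in bins:
        if not bucket:
            continue
        avg_conf = sum(c for c, _ in bucket) / len(bucket)
        accuracy = sum(1 for _, ok in bucket if ok) / len(bucket)
        # Each bin contributes in proportion to the answers it holds.
        ece += (len(bucket) / total) * abs(avg_conf - accuracy)
    return ece
```

A system that claims 90% confidence on four answers and gets one of them right scores 0.65; a system whose stated confidence matches its hit rate scores 0.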

Much of this can be traced to architecture. Contemporary systems begin with generation; evaluation, if it occurs, follows. Inquiry and expression collapse into a single act. The result is an answer whose form may persuade, even when its foundations would not.

Older philosophical perspectives help make sense of this. Popper argued that ideas gain legitimacy only when they are exposed to the possibility of being wrong. Polanyi emphasized that expertise involves an intuitive sensitivity to when a conclusion extends beyond its evidence. These traditions value discipline — logical, evidentiary, and epistemic — over eloquence.

Encouragingly, emerging research suggests a different direction. Verifier-guided generation treats answers as hypotheses that must earn their validity¹⁰. Uncertainty-aware methods examine whether retrieved evidence truly supports a claim¹¹. Hybrid approaches incorporate symbolic checks to reduce high-risk errors in sensitive domains¹². Multi-stage pipelines separate extraction, evaluation, and narrative, and consistently outperform single-pass generation¹³.

What ties these approaches together is a reversal of assumptions: generation becomes the final step in a chain that starts with assessment and proceeds through evidence gathering, constraint application, and uncertainty evaluation.
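As a minimal sketch of that reversal, the Python below checks each candidate claim against its evidence before any text is assembled, and abstains when nothing survives the check. Every name here (`Claim`, `support_score`, `answer`, the 0.8 threshold) is hypothetical, and the word-overlap scorer is only a stand-in for a real verifier such as an entailment model. It is not the method of any paper cited here.

```python
from dataclasses import dataclass


@dataclass
class Claim:
    text: str
    evidence: list[str]  # passages offered in support of the claim


def _words(text: str) -> set[str]:
    """Lowercase the text and strip trailing punctuation from each word."""
    return {w.strip(".,").lower() for w in text.split()}


def support_score(claim: Claim) -> float:
    """Toy verifier: fraction of the claim's words that appear in its evidence.

    Assumption: a production system would use a trained verifier instead.
    """
    claim_words = _words(claim.text)
    if not claim_words:
        return 0.0
    evidence_words: set[str] = set()
    for passage in claim.evidence:
        evidence_words |= _words(passage)
    return len(claim_words & evidence_words) / len(claim_words)


def answer(claims: list[Claim], threshold: float = 0.8) -> str:
    """Assess first, write last; abstain if no claim clears the threshold."""
    supported = [c for c in claims if support_score(c) >= threshold]
    if not supported:
        return "Insufficient evidence to answer."
    return " ".join(c.text for c in supported)
```

Given one claim its evidence supports and one it does not, only the supported claim reaches the output. Given no supported claims, the function declines to answer rather than improvising.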

This shift matters because these systems are being woven into decision processes across society — in underwriting, scientific synthesis, regulatory analysis, and enterprise operations. These domains do not rest on eloquence. They rest on justification, reproducibility, and the capacity to withstand challenge.

We once asked whether a machine could write. The more pressing question now is whether a machine can explain — and whether it can remain silent when explanation is not possible.

Only systems capable of justifying their conclusions will deserve the trust that fluency alone once seemed to promise.

Endnotes

¹ ChatGPT makes medicine easy to swallow: an exploratory case study on simplified radiology reports — Jeblick et al., European Radiology (2023).

² Adapted Large Language Models Can Outperform Medical Experts in Clinical Text Summarization — Van Veen et al., Nature Medicine (2024).

³ Large Legal Fictions: Profiling Legal Hallucinations in Large Language Models — Dahl, Magesh, Suzgun, Ho (2024).

⁴ TruthfulQA: Measuring How Models Mimic Human Falsehoods — Lin et al. (2021/2022).

⁵ Language Models (Mostly) Know What They Know — Kadavath et al. (2022).

⁶ Evaluating base and retrieval augmented LLMs with user-reported hallucinations in AI mobile apps reviews — Masanneck et al., Scientific Reports (2025).

⁷ Enabling Large Language Models to Generate Text with Citations — Gao et al., EMNLP (2023).

⁸ Synchronous Faithfulness Monitoring for Trustworthy Retrieval-Augmented Generation — Wu et al., EMNLP (2024).

⁹ Language Models Don’t Always Say What They Think: Unfaithful Explanations in Chain-of-Thought Prompting — Turpin et al., NeurIPS (2023).

¹⁰ Chain-of-Verification Reduces Hallucination in Large Language Models — Dhuliawala et al. (2023/2024).

¹¹ Faithfulness-Aware Uncertainty Quantification for Fact-Checking the Output of Retrieval Augmented Generation — Fadeeva et al. (2025).

¹² Proof of Thought: Neurosymbolic Program Synthesis allows Robust and Interpretable Reasoning — Ganguly et al. (2024).

¹³ FActScore: Fine-grained Atomic Evaluation of Factual Precision in Long Form Text Generation — Min et al., EMNLP (2023).

Change log

Dec 19, 2025: Corrected Endnotes (replaced broken/non-public citation placeholders with verified primary-source links).